- #LSI MEGARAID STORAGE MANAGER ERROR CODES INSTALL#

- #LSI MEGARAID STORAGE MANAGER ERROR CODES SERIAL#

- #LSI MEGARAID STORAGE MANAGER ERROR CODES UPDATE#

There are some error messages regarding sfcb and LSIESG access denied in the Vmkaccess log. I do not know what sfcb really is, but it looks like there's perhaps an access control problem with starting part of the LSI management program on the ESXi host.

I realize this is a pretty stale thread, but do you have a sample of what the slp_helper dialog should look like? I installed it on a 2008 server that is running MSM (on IP address 10.0.0.8) and am trying to talk to a host at 10.0.0.51 running ESXi 5.1. Here's the dialog I got back from the runme window:


The only difference is that MSM returns instantly, as there is no listener running, rather than having the firewall drop the packets. I am thinking that the LSI provider did not install properly.
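The difference between a closed port (no listener, so the connection is refused almost immediately) and a firewall silently dropping packets (the attempt hangs until it times out) can be checked from the MSM machine with a small probe. The following is a minimal Python sketch, not part of MSM or any LSI tooling; the function name `probe_tcp_port` and the 3-second default timeout are illustrative assumptions.

```python
import socket


def probe_tcp_port(host: str, port: int, timeout: float = 3.0) -> str:
    """Classify a TCP port as 'open', 'refused', or 'filtered'.

    'refused'  : the host answered but nothing is listening on the port.
    'filtered' : no answer within the timeout (packets probably dropped).
    """
    try:
        # create_connection performs a full TCP handshake attempt.
        with socket.create_connection((host, port), timeout=timeout):
            return "open"
    except ConnectionRefusedError:
        return "refused"
    except (socket.timeout, TimeoutError):
        return "filtered"
    except OSError as exc:
        # Anything else (no route, DNS failure, ...) is reported as-is.
        return f"error: {exc}"
```

For example, calling `probe_tcp_port("10.0.0.51", 3071)` from the MSM server (address and port as mentioned in this thread) should come back quickly with `"refused"` if nothing is listening, and only after the timeout with `"filtered"` if packets are being dropped. Note that a firewall configured to reject rather than drop would also show up as `"refused"`, so the result is a hint, not proof.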


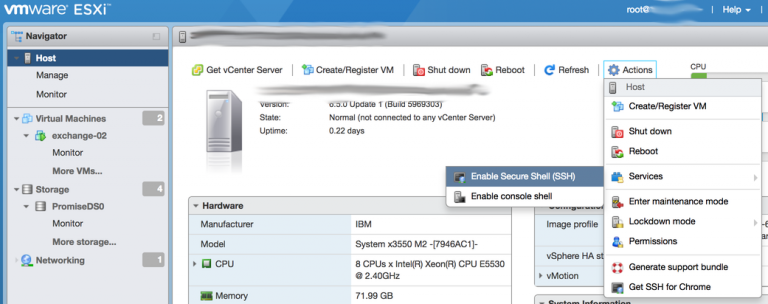

I am able to view the health status within ESXi and see my disks. However, the MegaRAID Storage Manager doesn't work. It appears as though the listener for port 3071 is not running on the ESXi host (as verified with esxcli network ip connections list). I have tried with the firewall unloaded as well as with turning on the firewall option for the VM serial, as it appears to almost disable the firewall.
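If the output of `esxcli network ip connections list` is saved to a file, or captured over SSH, the check for a listener on port 3071 can be scripted. This is a rough Python sketch; it assumes the output is whitespace-separated columns in which a listening socket has a `LISTEN` state and an address column ending in `:<port>`, and the helper name `find_listeners` is made up for illustration.

```python
from typing import List


def find_listeners(connections_output: str, port: int) -> List[str]:
    """Return the lines that look like a LISTEN socket on the given port.

    Assumes whitespace-separated columns, one connection per line, with a
    'LISTEN' state field and an address field ending in ':<port>'.
    """
    suffix = f":{port}"
    hits = []
    for line in connections_output.splitlines():
        fields = line.split()
        is_listening = "LISTEN" in fields
        on_port = any(field.endswith(suffix) for field in fields)
        if is_listening and on_port:
            hits.append(line.strip())
    return hits
```

An empty list for port 3071 would match what is described above: nothing on the host is listening for MSM. A non-empty list would point the search back towards the firewall or the network path.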


So, I assume there's no other way around this issue than actually buying enterprise disks such as the "Western Digital® RE Enterprise 2TB", which is supported by LSI. The controller runs with 2 arrays, a RAID1 using WD enterprise disks and a RAID6 using SAMSUNG desktop disks. The log was flooded with the error message provided in my original post. The weird thing is that the RAID1 array uses enterprise disks. Could it really be that an issue with one of the SAMSUNG disks on the RAID6 array causes one of the WD disks to be evicted from the RAID1 array? That seems like odd behaviour to me.

It's been a while since I dealt with this issue. I believe the actual cause was linked to the power supply. I had connected all 4 backplanes to the same power connector (using splitters). At peaks in power consumption, disks would "fall out" because enough power could not be delivered. I fixed this by simply splitting two power connectors across two backplanes each.

I am running ESXi 5.0 update 1, installed via the upgrade option on a running stock 5.0 ESXi install. I am using an LSI MegaRAID 9265-8i RAID card. As I am running update 1, it came with the 5.34-1 version of the MegaRAID driver, so I skipped the MegaRAID driver install step and only installed the LSI Provider for ESXi.

Apparently this error was due to the type of disks used. LSI responded to my support ticket with the following:

The SAMSUNG HD103UJ has not been qualified as a compatible hard drive. The error and subsequent time-out event is caused by a communication issue due to the error reporting mechanism used by desktop-level hard drives, which are not intended for RAID functionality.

I was not aware that this was an issue, but after having tested things more I believe this indeed must be the root of the issue. I've changed backplanes and SAS cables with no success, and I've carried out "stress" tests on both the OS virtual disk (using enterprise Dell disks) and the DATA disk (using desktop Samsung disks), and only when running the "stress" test on the DATA disks did I receive these errors.